And then we describe who consumes the mysterious We first describe how ColumnarBatch is filled So, now we know what variable is returned in the iterator. GetCurrentKey always returns null and is implemented in theīase class of SpecificParquetRecordReaderBase. With data and this variable is returned in getCurrentValue function. The nextBatch function fills (how? we tell later) columnarBatch The batch mode ( returnColumnarBatch is set to true). If columnarBatch is already initialized, which it is, see stepģ.ii), and then to nextBatch(). The function first calls resultBatch (which becomes no-op, It what what is called when the iterator wrapper from the Step 2 isĬonsumed (where?, we tell later). VectorizedParquetRecordReader demands more attention, because Step 4: Implementation of the RecordReader interface in We discuss this class in more detail later. Step 3(ii): initBatch mainly allocates columnarBatch object. This is stored in the totalRowCount variable. We know how many rows are there in the InputFileSplit. Type ParquetFileReader is allocated in the base class.īy the end of the SpecificParquetRecordReaderBase.initialize(.) Requested schema is inferred, and a reader of Step 3(i): initialize calls the initialize function of the super class InitBatch(partitionSchema, file.partitionValues) functions are called. Initialize(split, hadoopAttemptContext) and Object allocation VectorizedParquetRecordReader, This is somewhat based on parquet-mr’s ColumnReader “. InternalRows or ColumnarBatches directly using the Parquet column APIs. Step 3: What does VectorizedParquetRecordReader do? According to theĬomment in the file,” A specialized RecordReader that reads into (and its base class SpecificParquetRecordReaderBase). (we discuss later how this iterator is consumed, step 4)Īround Hadoop style RecordReader, which is implemented

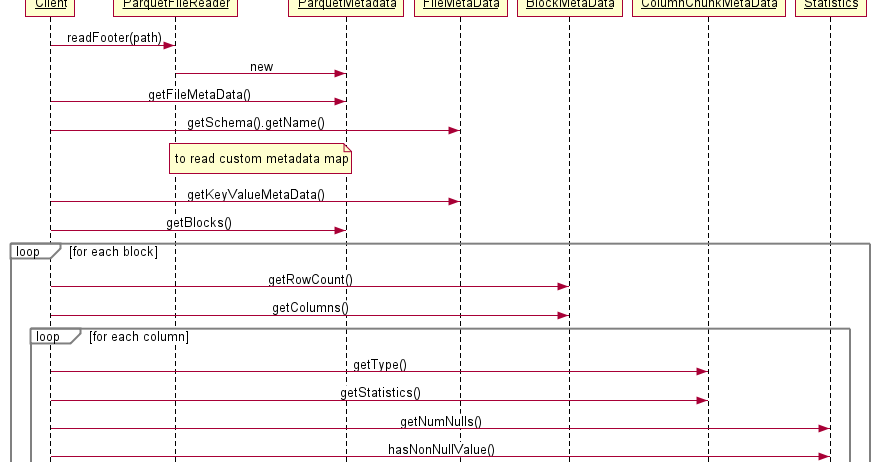

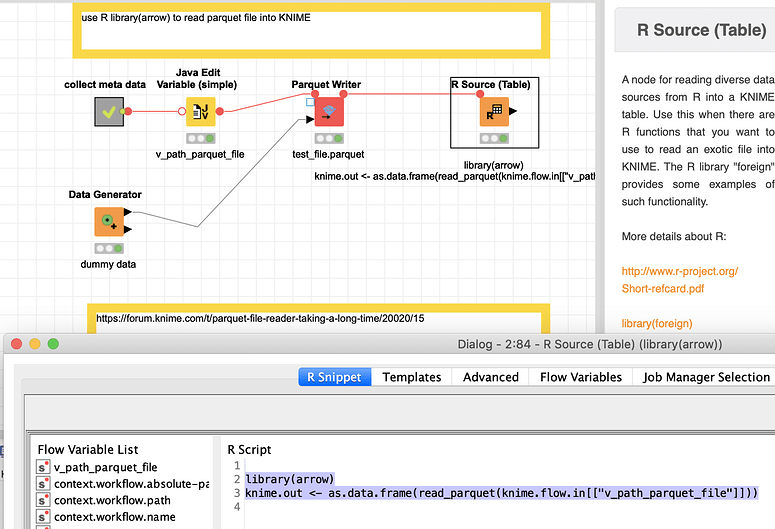

The RecordReaderIterator class wraps a Scala iterator Here parquetReader is of type VectorizedParquetRecordReader. Val iter = new RecordReaderIterator ( parquetReader ) Step 2: ParquetFileFormat has a buildReader function that returns a Whole-stage-code-generation path with the batch reading support. Various input file formats are implemented this way. I am not entirely clear how does this happen, but it makes sense. Step 1: So for reading a data source, we look into DataSourceScanExec class.įrom here, the code somehow ends up in the ParquetFileFormat class. You can find them having Exec as a suffix in their name. In Spark SQL, various operations are implemented in their respectiveĬlasses. This detail is important because it dictates how WSCG is done. The schema for intWithPayload.parquet file is. In this example, I am trying to read a file which was generated by the The Whole Stage Code Generation (WSCG) on. This commentary is made on the 2.1 version of the source code, with Note: This blog post is work in progress with its content, accuracy, parquet ( "intWithPayload.parquet" )Īs documented in the Spark SQL programming While (null != (pages = r.Val parquetFileDF = spark. ParquetFileReader r = new ParquetFileReader(conf, path, readFooter) MessageType schema = readFooter.getFileMetaData().getSchema() ParquetMetadata readFooter = ParquetFileReader.readFooter(conf, path, ParquetMetadataConverter.NO_FILTER) String PATH_SCHEMA = “s3://” + object.getBucketName() + “/” + object.getKey() Ĭonfiguration conf = new Configuration() Ĭonf.set(“fs.s3.awsAccessKeyId”, credentials.accessKeyId) Ĭonf.set(“fs.s3.awsSecretAccessKey”, cretKey) : .3Service.(Lorg/jets3t/service/security/AWSCredentials ) I am getting the below error when trying to use the above code Package de.jofre.test import java.io.IOException import .Configuration import .Path import .page.PageReadStore import .data.Group import. 
import .converter.ParquetMetadataConverter import .ParquetFileReader import .metadata.ParquetMetadata import .ColumnIOFactory import .MessageColumnIO import .RecordReader import .MessageType import .Type public class Main Author padmalcom Posted on JCategories Computer Tags BigData, Java, Parquet
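To make the flow in Steps 2 to 4 more concrete, here is a minimal sketch in plain Java. It is not Spark's code: SimpleRecordReader, Batch, ToyBatchReader and ReaderIterator are hypothetical stand-ins for the Hadoop RecordReader contract, ColumnarBatch, VectorizedParquetRecordReader and RecordReaderIterator. It assumes only what the text above describes: one reusable batch is allocated up front, nextKeyValue delegates to nextBatch, getCurrentValue returns the batch, and getCurrentKey returns null.

```java
import java.util.Iterator;
import java.util.NoSuchElementException;

public class VectorizedReadSketch {

    /** Hypothetical, trimmed-down stand-in for a Hadoop-style RecordReader. */
    interface SimpleRecordReader<K, V> {
        boolean nextKeyValue();   // advance; false once the input is exhausted
        K getCurrentKey();
        V getCurrentValue();
    }

    /** Toy replacement for ColumnarBatch: a single int column plus a row count. */
    static final class Batch {
        final int[] values;
        int numRows;
        Batch(int capacity) { values = new int[capacity]; }
    }

    /** Toy reader: refills the same Batch object on every nextKeyValue() call. */
    static final class ToyBatchReader implements SimpleRecordReader<Void, Batch> {
        private final long totalRowCount;   // known up front, as after initialize()
        private final Batch batch;          // allocated once, as in initBatch()
        private long rowsReturned = 0;

        ToyBatchReader(long totalRowCount, int capacity) {
            this.totalRowCount = totalRowCount;
            this.batch = new Batch(capacity);
        }

        @Override public boolean nextKeyValue() { return nextBatch(); }

        private boolean nextBatch() {
            if (rowsReturned >= totalRowCount) return false;
            int n = (int) Math.min(batch.values.length, totalRowCount - rowsReturned);
            for (int i = 0; i < n; i++) batch.values[i] = (int) (rowsReturned + i);
            batch.numRows = n;
            rowsReturned += n;
            return true;
        }

        @Override public Void getCurrentKey() { return null; }    // the key is never used
        @Override public Batch getCurrentValue() { return batch; }
    }

    /** Adapts the pull-style reader to a standard Iterator. */
    static final class ReaderIterator<V> implements Iterator<V> {
        private final SimpleRecordReader<?, V> reader;
        private boolean havePair = false;
        private boolean finished = false;

        ReaderIterator(SimpleRecordReader<?, V> reader) { this.reader = reader; }

        @Override public boolean hasNext() {
            if (!finished && !havePair) {
                finished = !reader.nextKeyValue();
                havePair = !finished;
            }
            return !finished;
        }

        @Override public V next() {
            if (!hasNext()) throw new NoSuchElementException();
            havePair = false;
            return reader.getCurrentValue();
        }
    }

    /** Consumes the iterator and returns {number of batches, number of rows}. */
    static long[] drain(long totalRows, int capacity) {
        Iterator<Batch> iter = new ReaderIterator<>(new ToyBatchReader(totalRows, capacity));
        long batches = 0, rows = 0;
        while (iter.hasNext()) {
            Batch b = iter.next();
            batches++;
            rows += b.numRows;
        }
        return new long[] {batches, rows};
    }

    public static void main(String[] args) {
        long[] r = drain(10, 4);
        System.out.println("batches=" + r[0] + " rows=" + r[1]);  // prints: batches=3 rows=10
    }
}
```

Note that the iterator hands out the same Batch object each time, so a consumer has to finish with one batch before calling hasNext again. That is a property of this sketch's reuse of a single buffer, chosen to mirror the single columnarBatch allocation described in Step 3(ii).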
